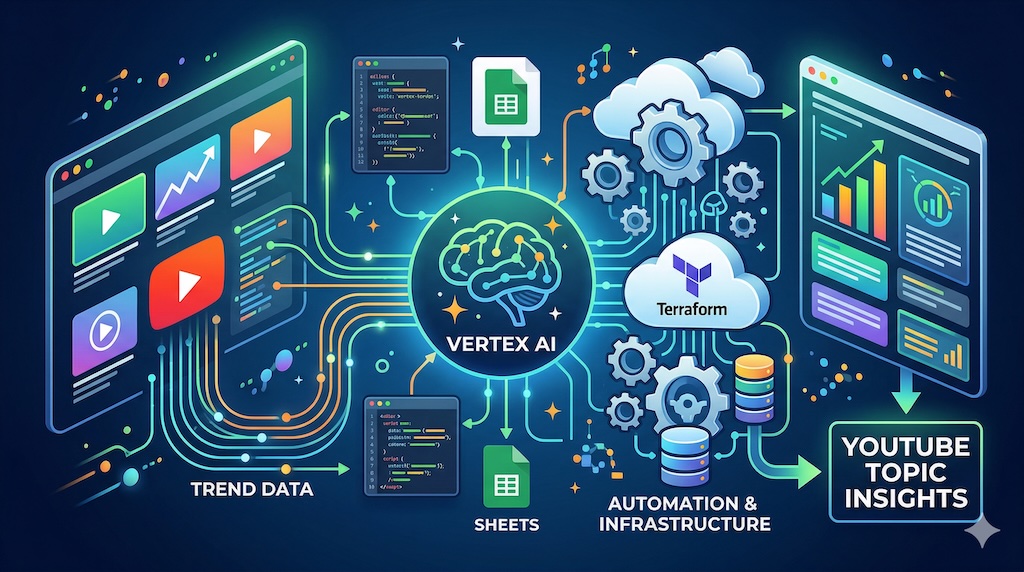

Understanding and spotting trends in organic YouTube traffic based on specific queries can often be a manual and time-consuming process. To solve this, the Google Marketing Solutions team has published an open-source repository for a tool called YouTube Topic Insights. Developed by Google Customer Solutions Engineer Francesco Landolina, the project transforms raw video data into actionable summaries by combining the data-gathering capabilities of the YouTube Data API with the video and language understanding of Gemini models.

For Google Apps Script developers interested in building scalable tools, this repository is a fantastic example of using Vertex AI at scale. It orchestrates complex, long-running processes directly from a Google Sheet and pairs them with Vertex AI batch prediction. In this post we will highlight some interesting parts of the infrastructure setup and the pattern used to work around common Apps Script execution limits.

Project architecture and setup

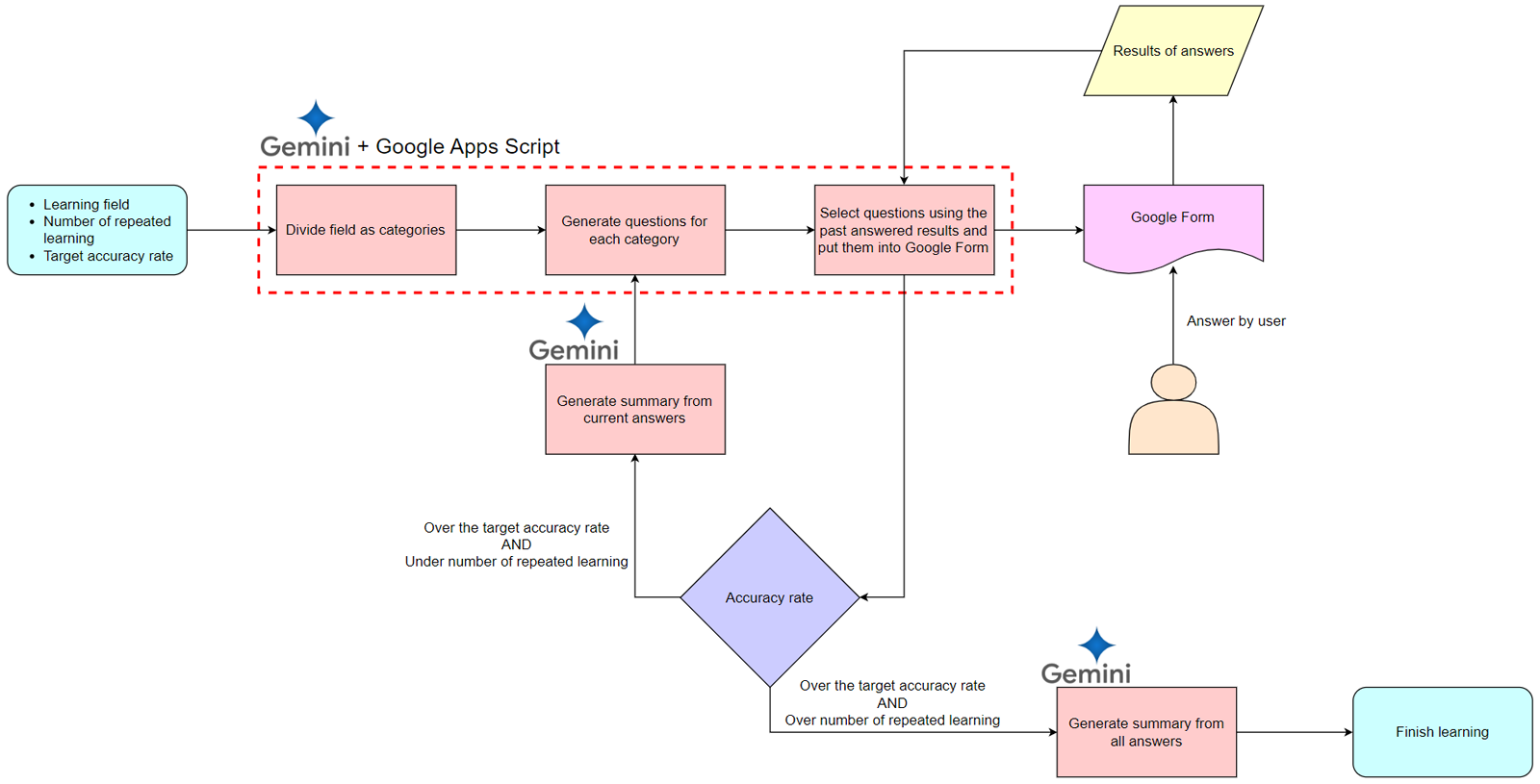

The solution is divided into two main components that make it easier to set up the tool and process the data:

- GCP Infrastructure as Code: The necessary cloud environment is defined using Terraform. This makes deployment easier by enabling required APIs such as Vertex AI and the YouTube Data API. While the Terraform script attempts to set up the OAuth Consent Screen using the google_iap_brand resource, you will need to configure the OAuth Consent Screen manually within the Google Cloud Console, as the official Terraform documentation notes that the underlying IAP OAuth Admin APIs were permanently shut down on March 19, 2026.
- Google Apps Script: The brain of the operation lives within a Google Sheet. It calls the YouTube API to find videos, prepares and submits analysis jobs to Vertex AI batch prediction, fetches the completed results, and writes the human-readable insights back into the spreadsheet interface.
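
To make the "prepares and submits analysis jobs" step more concrete, here is a minimal sketch of how the batch input and job body could be assembled. This is not taken from the repository: the function names, the MODEL_ID placeholder, the Cloud Storage paths, and the idea of referencing each video by URL through a fileData part are all assumptions, and the field names follow the Vertex AI batch prediction REST format as I understand it, so check them against the current documentation before relying on them.

```javascript
// Sketch only: names and request shapes below are assumptions, not the
// repository's actual code.

// Build one JSONL line asking Gemini to analyse a single video.
// Assumption: the video is referenced by URL through a fileData part.
function buildRequestLine(videoUrl, prompt) {
  return JSON.stringify({
    request: {
      contents: [{
        role: 'user',
        parts: [
          { text: prompt },
          { fileData: { fileUri: videoUrl, mimeType: 'video/mp4' } },
        ],
      }],
    },
  });
}

// Join the per-video lines into a JSONL payload (one request per line).
function buildJsonl(videoUrls, prompt) {
  return videoUrls.map((url) => buildRequestLine(url, prompt)).join('\n');
}

// Build the body for a batchPredictionJobs create call that reads the JSONL
// file from Cloud Storage and writes predictions back to Cloud Storage.
function buildBatchJobBody(displayName, model, inputUri, outputPrefix) {
  return {
    displayName: displayName,
    model: model, // e.g. 'publishers/google/models/MODEL_ID' (placeholder)
    inputConfig: {
      instancesFormat: 'jsonl',
      gcsSource: { uris: [inputUri] },
    },
    outputConfig: {
      predictionsFormat: 'jsonl',
      gcsDestination: { outputUriPrefix: outputPrefix },
    },
  };
}
```

In Apps Script, the resulting body would typically be sent with UrlFetchApp.fetch using a bearer token from ScriptApp.getOAuthToken(). Keeping the builders as pure functions makes them easy to check outside the spreadsheet.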

For developers setting this up, the repository includes a Quick Start template. The script automatically handles the initialisation of the spreadsheet structure when first opened, creating the necessary configuration and logging sheets in a safe, non-destructive way.
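
As an illustration of what "safe, non-destructive" initialisation can look like, the sketch below only creates a sheet when it is missing and only writes headers when the sheet is empty. The helper name ensureSheet, the sheet names Config and Logs, and the header values are hypothetical and not taken from the repository.

```javascript
// Sketch only: ensureSheet, the sheet names and the headers are hypothetical.

// Return the named sheet, creating it only if it does not exist yet.
// Headers are written only when the sheet is completely empty, so existing
// user data is never overwritten.
function ensureSheet(spreadsheet, name, headers) {
  let sheet = spreadsheet.getSheetByName(name);
  if (sheet === null) {
    sheet = spreadsheet.insertSheet(name);
  }
  if (sheet.getLastRow() === 0) {
    sheet.appendRow(headers);
  }
  return sheet;
}

// Simple trigger that Apps Script runs when the spreadsheet is opened.
function onOpen() {
  const ss = SpreadsheetApp.getActiveSpreadsheet();
  ensureSheet(ss, 'Config', ['Setting', 'Value']);
  ensureSheet(ss, 'Logs', ['Timestamp', 'Message']);
}
```

Because the helper checks before it writes, running it on every open is idempotent: a second run finds the sheets and their headers already in place and changes nothing.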

Overcoming the execution limit with Vertex AI batch prediction

As you can imagine, analysing hundreds of videos with an LLM is a compute-heavy task. If you attempt to process them synchronously in a simple loop, you will quickly hit the six-minute per-execution runtime limit for Google Apps Script.

To get around this, the solution uses Vertex AI batch prediction (see Batch inference with Gemini). Instead of waiting for Gemini to process each video, the script initiates an asynchronous batch job and uses a chained-trigger system to poll for completion. Once the batch job completes, the script retrieves the output, parses the JSON response from Gemini, and writes the summarised data back to the Google Sheet.
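
The chained-trigger idea can be sketched roughly as follows. This is my own minimal version, not the repository's implementation: the property key BATCH_JOB_NAME, the five-minute delay, the LOCATION value, and the function names are all assumptions. Each run checks the job state once and, if the job is still in progress, schedules a fresh one-off trigger for the next check, so no single execution gets anywhere near the runtime limit.

```javascript
// Sketch only: key names, delay and region are hypothetical.
const JOB_KEY = 'BATCH_JOB_NAME';     // script property holding the job name
const POLL_DELAY_MS = 5 * 60 * 1000;  // wait five minutes between checks
const LOCATION = 'us-central1';       // assumed Vertex AI region
const FAILED_STATES = ['JOB_STATE_FAILED', 'JOB_STATE_CANCELLED', 'JOB_STATE_EXPIRED'];

// One polling step. Dependencies are injected so the logic can be exercised
// outside Apps Script. Returns 'idle', 'done', 'failed' or 'rescheduled'.
function pollBatchJob(deps) {
  const jobName = deps.props.getProperty(JOB_KEY);
  if (!jobName) return 'idle'; // nothing in flight

  const state = deps.fetchJobState(jobName);
  if (state === 'JOB_STATE_SUCCEEDED') {
    deps.onSuccess(jobName);
    deps.props.deleteProperty(JOB_KEY);
    return 'done';
  }
  if (FAILED_STATES.includes(state)) {
    deps.props.deleteProperty(JOB_KEY);
    return 'failed';
  }
  // Still pending or running: chain another one-off trigger.
  deps.scheduleNextPoll(POLL_DELAY_MS);
  return 'rescheduled';
}

// Apps Script wiring: the function that each time-based trigger calls.
function pollTrigger() {
  // Remove spent triggers for this handler so they do not pile up.
  ScriptApp.getProjectTriggers()
    .filter((t) => t.getHandlerFunction() === 'pollTrigger')
    .forEach((t) => ScriptApp.deleteTrigger(t));

  pollBatchJob({
    props: PropertiesService.getScriptProperties(),
    fetchJobState: (jobName) => {
      // jobName is the full resource name returned when the job was created.
      const url = `https://${LOCATION}-aiplatform.googleapis.com/v1/${jobName}`;
      const res = UrlFetchApp.fetch(url, {
        headers: { Authorization: `Bearer ${ScriptApp.getOAuthToken()}` },
      });
      return JSON.parse(res.getContentText()).state;
    },
    onSuccess: (jobName) => console.log(`Batch job finished: ${jobName}`),
    scheduleNextPoll: (ms) =>
      ScriptApp.newTrigger('pollTrigger').timeBased().after(ms).create(),
  });
}
```

Storing the job name in script properties is what lets each short-lived execution pick up where the previous one left off. In a real tool, onSuccess would read the prediction output and write the results to the sheet.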

Summary

The YouTube Topic Insights solution is an excellent example of how to build complex applications using Vertex AI batch prediction. It provides practical patterns for managing asynchronous tasks and for working with advanced Vertex AI features from Apps Script.

If you are interested in exploring the code further or setting up the tool for your own research, you can find the complete source code and deployment instructions in the YouTube Topic Insights GitHub repository.

Source: YouTube Topic Insights: Automated YouTube Trend Analysis with Gemini AI

Member of Google Developers Experts Program for Google Workspace (Google Apps Script) and interested in supporting Google Workspace Devs.