We have all been there: You build a beautiful, automated dashboard in Google Sheets. It’s dynamic, it’s collaborative, and it’s perfectly integrated with your data sources. Then, the stakeholder request comes in: “Can you email this to me as an Excel file every Monday morning?”

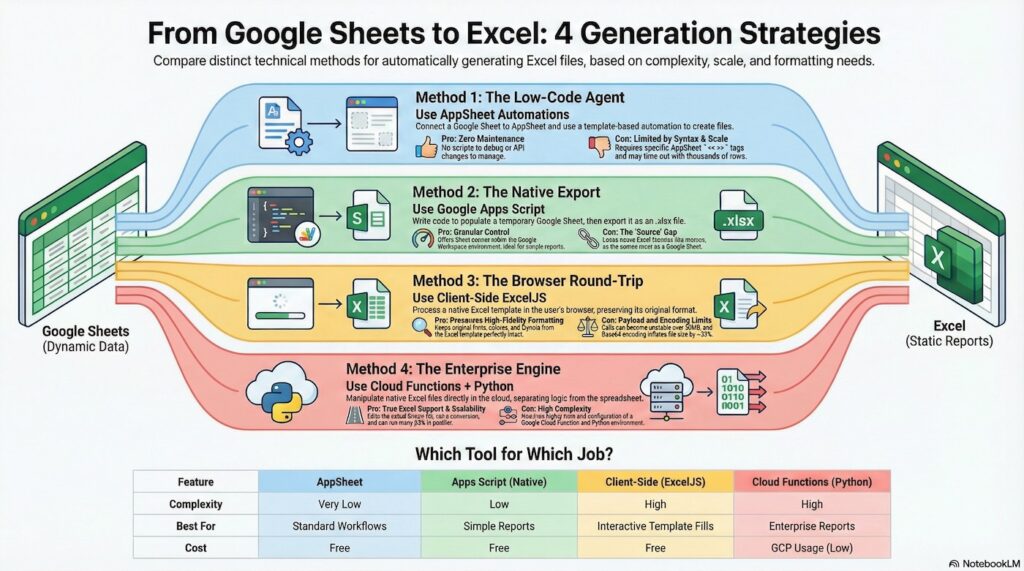

For Google Workspace developers, the “Excel Requirement” is often a stumbling block. While Google Sheets is powerful, the bridge to Excel can be shaky. In this post, I’m going to explore four architectural approaches to solving this problem. If you would like to play along here is the Google Sheet Invoice Template and the Excel Invoice Template.

1. The Low-Code Agent (AppSheet Automation)

Before you write a single line of code, you should consider if the platform can do the heavy lifting for you. Since AppSheet is now a core part of Google Workspace, it offers a native “No-Code” way to generate Excel files that is robust, scheduled, and template-based.

The Strategy

Instead of writing a script to fetch data and build a file, you connect your Google Sheet to an AppSheet app. You then configure an Automation that triggers on a schedule or a data change.

The Workflow:

- Trigger: A new row is added to your “Invoices” sheet (or a weekly schedule fires).

- Task: AppSheet runs a “Create a new file” task.

- Template: It reads a simplified Excel template stored in Drive, replaces tags like

<<[Qty]>>with actual data, and generates the file. - Delivery: The file is saved to Drive or emailed directly to the client.

Why This Wins

- Zero Maintenance: No scripts to debug, no OAuth tokens to manage, and no breaking changes when APIs update.

- Native Templates: It supports complex Excel formatting out of the box.

The Limitation

- Syntax: You must use AppSheet’s specific templating syntax (

<< >>) inside your Excel file. - Scaling: Generating reports with thousands of line items can hit automation timeout limits.

2. The Native Export (Apps Script)

If you need more granular control or don’t want to spin up an AppSheet app, the most common starting point is attempting to do everything within Apps Script. You create a temporary Google Sheet, fill it with data, and then export it using the Drive API.

The Code

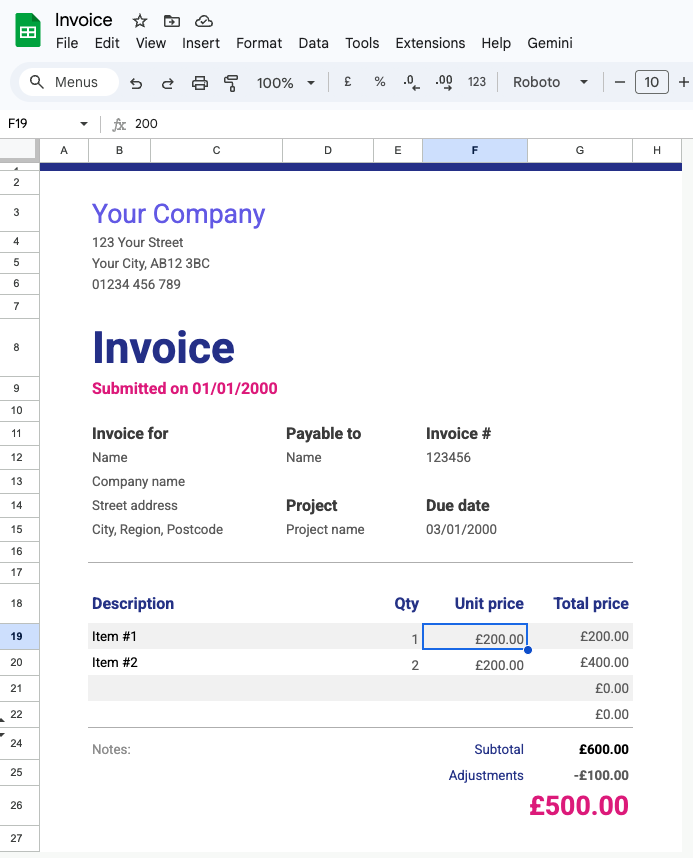

In this scenario, we assume you have a Google Sheet version of your Invoice template. We first write the specific line items to the Sheet, then export it.

function generateInvoiceExport() {

const ss = SpreadsheetApp.getActiveSpreadsheet();

const sheet = ss.getSheetByName('InvoiceTemplate');

// 1. Prepare Invoice Data

const invoiceData = [

{ desc: "Web Development - Phase 1", qty: 40, unit: 100 },

{ desc: "Server Hosting (Annual)", qty: 1, unit: 250 },

{ desc: "Domain Registration", qty: 2, unit: 15 },

{ desc: "Maintenance Retainer", qty: 10, unit: 85 }

];

// 2. Map data to the Sheet structure (Cols B, C, D, E, F)

// B=Desc, E=Qty, F=Unit. (C and D are skipped/empty)

const rows = invoiceData.map(item => [item.desc, "", "", item.qty, item.unit]);

// 3. Write data to the Google Sheet

// Matches Template: Start at Row 19, Column B (2)

if (rows.length > 0) {

// Corrected: ensure the number of columns (5) matches your data structure

sheet.getRange(19, 2, rows.length, 5).setValues(rows);

}

// 4. Construct the export URL for the populated sheet

const fileName = `Invoice_${new Date().toISOString().slice(0,10)}.xlsx`;

const url = `https://docs.google.com/spreadsheets/d/${ss.getId()}/export?format=xlsx&gid=${sheet.getSheetId()}`;

// 5. Fetch and Save

const params = {

method: "GET",

headers: { "Authorization": `Bearer ${ScriptApp.getOAuthToken()}` },

muteHttpExceptions: true

};

const response = UrlFetchApp.fetch(url, params);

if (response.getResponseCode() === 200) {

const blob = response.getBlob().setName(fileName);

const file = DriveApp.createFile(blob);

console.log(`Success: Invoice saved to Drive (ID: ${file.getId()})`);

} else {

console.log(`Error: ${response.getContentText()}`);

}

};

The Limitations

- Speed & Scale: The overhead of creating a temporary sheet and exporting it adds up. Generating 50 invoices in a loop will take a long time.

- The “Source” Gap: Your source material must be a Google Sheet, meaning you lose Excel-specific features like Power Query or VBA macros from your template.

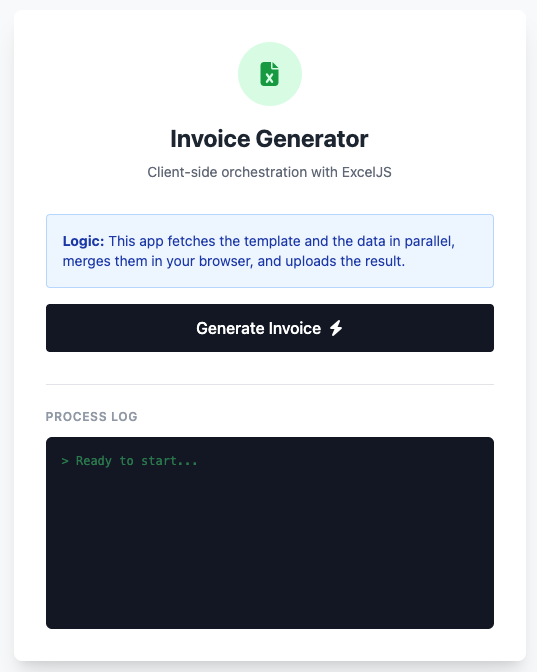

3. The Browser Round-Trip (ExcelJS)

If you need to maintain stricter fidelity to an existing Excel template (preserving fonts, colors, and layouts) and are interested in speed, you can use the “Round Trip” technique.

This method uses the user’s browser as a processing engine. You fetch the Invoice.xlsx template from Drive, process it client-side with ExcelJS, and send the result back.

The Strategy

- Parallel Fetching (Client): The browser fires concurrent requests to Apps Script to fetch the template (Base64) and invoice data.

- Client-Side Processing: ExcelJS loads the template, injects data into specific cells (preserving layout), and recompiles the file.

- Upload: The new file is encoded and sent back to Drive.

The Code

This snippet focuses on the core orchestration logic. For the full UI setup and helper functions, see the linked project.

/* --- CLIENT SIDE (Core Logic) --- */

async function startGeneration() {

// 1. Parallel Fetching

// Fire both requests at once to speed up the wait time

const [templateResponse, invoiceData] = await Promise.all([

runGoogleScript('getTemplateBase64', null),

runGoogleScript('getInvoiceData')

]);

// 2. Load into ExcelJS

const workbook = new ExcelJS.Workbook();

const binaryString = atob(templateResponse.base64);

const bytes = new Uint8Array(binaryString.length);

for (let i = 0; i < binaryString.length; i++) { bytes[i] = binaryString.charCodeAt(i); } await workbook.xlsx.load(bytes.buffer); // 3. Modify the Sheet const sheet = workbook.worksheets[0]; let currentRow = 19; // Starting at Row 19 based on template invoiceData.forEach((item, index) => {

// Ensure we do not write past the visual template limit (e.g. Row 22)

if (currentRow + index > 22) return;

const row = sheet.getRow(currentRow + index);

// Inject data into specific cells (B, E, F)

row.getCell(2).value = item.desc;

row.getCell(5).value = item.qty;

row.getCell(6).value = item.unit;

// ExcelJS preserves the existing styles of these cells!

row.commit();

});

// 4. Export & Save

const outBuffer = await workbook.xlsx.writeBuffer();

// Convert buffer to base64 (simplified for brevity)

let binary = '';

const outBytes = new Uint8Array(outBuffer);

for (let i = 0; i < outBytes.length; i++) {

binary += String.fromCharCode(outBytes[i]);

}

const outBase64 = btoa(binary);

// Upload back to Drive

await runGoogleScript('saveProcessedFile', outBase64, `Invoice_${Date.now()}.xlsx`);

}

/* --- SERVER SIDE (Apps Script) --- */

function getTemplateBase64(templateId) {

const file = DriveApp.getFileById(templateId);

return {

base64: Utilities.base64Encode(file.getBlob().getBytes())

};

}

function saveProcessedFile(base64Content, fileName) {

const blob = Utilities.newBlob(

Utilities.base64Decode(base64Content),

MimeType.MICROSOFT_EXCEL,

fileName

);

return { url: DriveApp.createFile(blob).getUrl() };

}

The Trade-off

- Payload Limits:

google.script.runcalls can become unstable when the payload exceeds 50MB. - Base64 Inflation: Remember that Base64 encoding increases file size by ~33%.

4. The Enterprise Engine (Cloud Functions + Python)

For robust, high-fidelity reporting, we need to leave the Google Sheets conversion pipeline behind. By using Google Cloud Functions with Python, we can manipulate native Excel files directly using libraries like openpyxl.

The “User Identity” Pattern

Instead of managing complex Service Account permissions, this solution uses the User’s Identity to perform Drive operations.

- Apps Script: Generates an OIDC ID Token (for identity), using

getIdentityToken()) and an OAuth Access Token (for Drive permissions) withgetOAuthToken(). - Cloud Function: Verifies the ID Token and uses the Access Token to initialise the Drive API client as the user.

Setup Instructions

1. Google Cloud Function (Python)

- Deploy the Function:

- Source:

cloud-fn.py - Runtime: Python 3.x

- Entry point:

generate_excel_report - Requirements:

functions-framework,google-auth,google-api-python-client,openpyxl

- Source:

- Configure Environment:

- Open

cloud-fn.pyand updateAPPS_SCRIPT_CLIENT_IDwith your Apps Script’s OAuth Client ID.

Note: You can find this ID by running thelogClientId()helper function inCode.js.

- Open

2. Google Apps Script (Code.js)

- Update Manifest (

appsscript.json): Add thehttps://www.googleapis.com/auth/userinfo.emailscope. - Configuration: Update

YOUR_CLOUD_FUNCTION_URLincallInvoiceEngine()with your deployed function URL.

The Code

Google Apps Script (Code.js)

function callInvoiceEngine() {

const url = "YOUR_CLOUD_FUNCTION_URL";

// 1. Get Tokens

const accessToken = ScriptApp.getOAuthToken();

const idToken = ScriptApp.getIdentityToken(); // Requires 'openid' scope in manifest

// --- LOGGING FOR LOCAL TESTING ---

console.log("--- CREDENTIALS FOR LOCAL TESTING ---");

console.log("ID Token:", idToken);

console.log("Access Token:", accessToken);

console.log("-------------------------------------");

const payload = {

templateId: "ID_OF_TEMPLATE_FILE",

folderId: "ID_OF_DESTINATION_FOLDER",

// Matches the same dataset used in our other examples

data: [

{ desc: "Web Development - Phase 1", qty: 40, unit: 100 },

{ desc: "Server Hosting (Annual)", qty: 1, unit: 250},

{ desc: "Domain Registration", qty: 2, unit: 15},

{ desc: "Maintenance Retainer", qty: 10, unit: 85}

],

// The Magic Key: Passing the active user's Access Token for Drive operations

userToken: accessToken

};

const options = {

method: "post",

contentType: "application/json",

headers: {

"Authorization": `Bearer ${idToken}`

},

payload: JSON.stringify(payload)

};

UrlFetchApp.fetch(url, options);

}

function logClientId() {

// Helper to find the Project's OAuth Client ID

var token = ScriptApp.getOAuthToken();

var url = 'https://www.googleapis.com/oauth2/v1/tokeninfo?access_token=' + token;

var response = UrlFetchApp.fetch(url);

var data = JSON.parse(response.getContentText());

console.log('Client ID:', data.issued_to);

}

Pro Tip: Batching Requests

If you need to generate 50 invoices at once, don’t loop through them one by one. Use UrlFetchApp.fetchAll(requests) to fire off parallel requests to your Cloud Function. Since the heavy lifting happens in the cloud, Apps Script just acts as the orchestrator.

Google Apps Script (appsscript.json)

{

"oauthScopes": [

"https://www.googleapis.com/auth/drive",

"https://www.googleapis.com/auth/script.external_request",

"https://www.googleapis.com/auth/userinfo.email"

]

}

Cloud Function (cloud-fn.py)

import requests

import json

def run_test():

print("--- Local Cloud Function Tester ---")

print("Ensure you are running: functions-framework --target=generate_excel_report --source=cloud-fn.py --port=8080\n")

# 1. Gather Tokens & IDs

print("Paste your Apps Script Tokens (use Logger.log in GAS):")

id_token = input("1. ID Token (ScriptApp.getIdentityToken()): ").strip()

if not id_token: return

access_token = input("2. Access Token (ScriptApp.getOAuthToken()): ").strip()

if not access_token: return

template_id = input("3. Google Sheet/Excel Template ID: ").strip()

folder_id = input("4. Destination Folder ID: ").strip()

# 2. Prepare Payload

payload = {

"templateId": template_id,

"folderId": folder_id,

"data": [

{ "desc": "Local Test Item 1", "qty": 1, "unit": 10 },

{ "desc": "Local Test Item 2", "qty": 5, "unit": 20 }

],

"userToken": access_token

}

headers = {

"Authorization": f"Bearer {id_token}",

"Content-Type": "application/json"

}

# 3. Send Request

url = "http://localhost:8080"

print(f"\nSending POST request to {url}...")

try:

response = requests.post(url, json=payload, headers=headers)

print(f"\nStatus Code: {response.status_code}")

print(f"Response Body: {response.text}")

except requests.exceptions.ConnectionError:

print(f"\n[!] Could not connect to {url}.")

print("Is the functions-framework running?")

if __name__ == "__main__":

run_test()

Local Test Script (test_request.py)

Before deploying, you can test the entire flow locally to ensure your tokens and permissions are working correctly.

- Start the Function:

functions-framework --target=generate_excel_report --source=cloud-fn.py --port=8080 - Run the Tester:

python3 test_request.py(Paste tokens from Apps Script logs when prompted).

import requests

import json

def run_test():

print("--- Local Cloud Function Tester ---")

print("Ensure you are running: functions-framework --target=generate_excel_report --source=cloud-fn.py --port=8080\n")

# 1. Gather Tokens & IDs

print("Paste your Apps Script Tokens (use Logger.log in GAS):")

id_token = input("1. ID Token (ScriptApp.getIdentityToken()): ").strip()

if not id_token: return

access_token = input("2. Access Token (ScriptApp.getOAuthToken()): ").strip()

if not access_token: return

template_id = input("3. Google Sheet/Excel Template ID: ").strip()

folder_id = input("4. Destination Folder ID: ").strip()

# 2. Prepare Payload

payload = {

"templateId": template_id,

"folderId": folder_id,

"data": [

{ "desc": "Local Test Item 1", "qty": 1, "unit": 10 },

{ "desc": "Local Test Item 2", "qty": 5, "unit": 20 }

],

"userToken": access_token

}

headers = {

"Authorization": f"Bearer {id_token}",

"Content-Type": "application/json"

}

# 3. Send Request

url = "http://localhost:8080"

print(f"\nSending POST request to {url}...")

try:

response = requests.post(url, json=payload, headers=headers)

print(f"\nStatus Code: {response.status_code}")

print(f"Response Body: {response.text}")

except requests.exceptions.ConnectionError:

print(f"\n[!] Could not connect to {url}.")

print("Is the functions-framework running?")

if __name__ == "__main__":

run_test()

Why This Wins

- True Excel Support: You are editing the actual binary file, not a conversion.

- Separation of Concerns: The heavy lifting is done in the cloud, not in the browser or the Apps Script runtime.

Summary: Which Tool for Which Job?

| Feature | AppSheet | Apps Script (Native) | Client-Side (ExcelJS) | Cloud Functions (Python) |

|---|---|---|---|---|

| Complexity | Very Low | Low | High | High |

| Cost | Free | Free | Free | GCP Usage (Low) |

| Best For | Standard Workflows | Simple Reports | Interactive Template Fills | Enterprise Reports |

Final Thoughts

There is no “one size fits all” here. The “Excel Requirement” is a spectrum:

- For quick internal tools: Stick to Method 1 (AppSheet) and Method 2 (Native). It’s fast to build and free.

- For polished client reports: Method 3 (ExcelJS) is your best friend. It keeps the heavy lifting in the browser and preserves your branding.

- For enterprise scale: If you are generating hundreds of invoices a week, Method 4 (Cloud Functions) is the robust choice. It decouples the generation logic from the spreadsheet UI.

Choose the architecture that fits your volume and your “pixel-perfect” tolerance. I’m also keen to hear have got another ‘Excel Generator’ approach? Happy scripting!

Member of Google Developers Experts Program for Google Workspace (Google Apps Script) and interested in supporting Google Workspace Devs.